
tommyhmt Asks: pyspark explode out records based on hierarchy

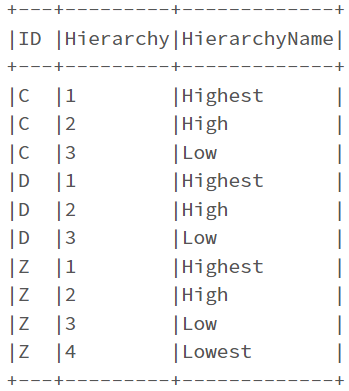


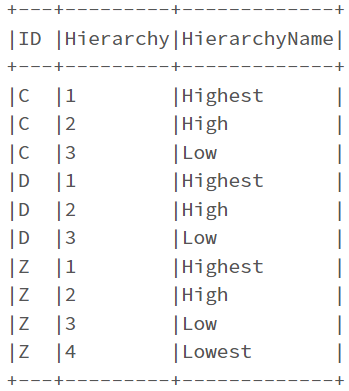


I have the following dataframe:

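```python
from pyspark.sql import SparkSession

# Reuse the active session (e.g. in a notebook) or start a local one
spark = SparkSession.builder.getOrCreate()

data = [("A", None, 1, 'Highest'), ("B", "A", 2, 'High'), ("C", "B", 3, 'Low'), ("D", "B", 3, 'Low'),
        ("X", "A", 2, 'High'), ("Y", "X", 3, 'Low'), ("Z", "Y", 4, 'Lowest')]

df = spark.createDataFrame(data=data, schema=['ID', 'ParentID', 'Hierarchy', 'HierarchyName'])

df.show(truncate=False)
```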

Basically, IDs C and D have the same parent, B, which has parent A.

Similarly, ID Z has parent Y, which has parent X, which has parent A.
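To make the parent chains concrete, here is a small plain-Python sketch (no Spark involved) that follows ParentID links upward from a given ID and returns the chain root-first. The `PARENT` mapping just restates the ID/ParentID pairs from the sample data, `lineage` is an illustrative helper name, and the loop assumes the hierarchy has no cycles.

```python
from typing import Dict, List, Optional

# ID -> ParentID, restating the sample data (None marks the root)
PARENT: Dict[str, Optional[str]] = {
    "A": None, "B": "A", "C": "B", "D": "B",
    "X": "A", "Y": "X", "Z": "Y",
}


def lineage(node: str) -> List[str]:
    """Walk ParentID links from `node` up to the root; return the chain root-first.

    Assumes the hierarchy is acyclic and every ParentID is itself a key of PARENT.
    """
    chain: List[str] = []
    current: Optional[str] = node
    while current is not None:
        chain.append(current)
        current = PARENT[current]
    return chain[::-1]


print(lineage("C"))  # ['A', 'B', 'C']
print(lineage("Z"))  # ['A', 'X', 'Y', 'Z']
```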

I am interested in the records at the lowest level, i.e. those that are not the ParentID of any other record (IDs C, D and Z in this example). It's important to note that these are not always just the records with the largest Hierarchy value (that would be only ID Z here). How can I turn the dataframe into the following using PySpark?

```python
# Desired result: for each lowest-level ID, one row per level of its chain, from the top down to itself
data = [("C", 1, 'Highest'), ("C", 2, 'High'), ("C", 3, 'Low'),
        ("D", 1, 'Highest'), ("D", 2, 'High'), ("D", 3, 'Low'),
        ("Z", 1, 'Highest'), ("Z", 2, 'High'), ("Z", 3, 'Low'), ("Z", 4, 'Lowest')]

transformed_df = spark.createDataFrame(data=data, schema=['ID', 'Hierarchy', 'HierarchyName'])

transformed_df.show(truncate=False)
```